Prevent Hallucinations in LLM: Best Practices for 2026

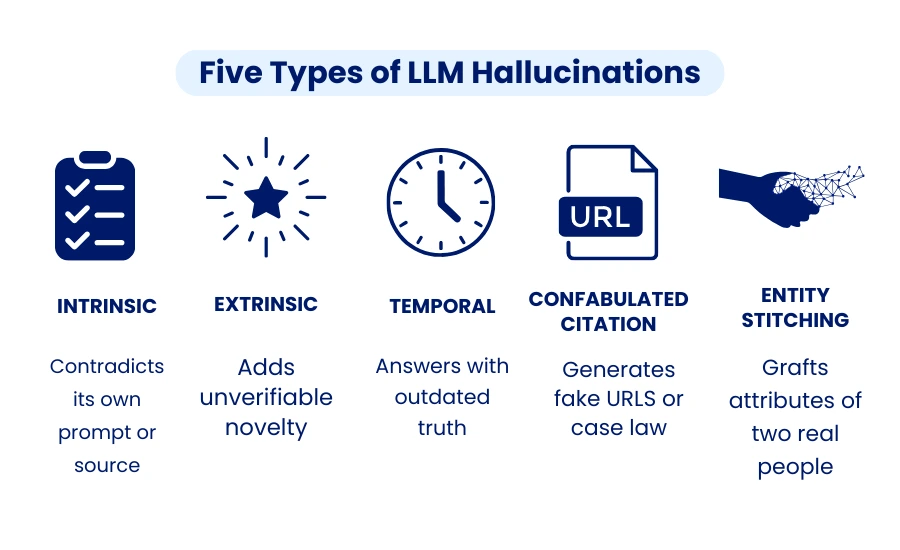

Large Language Models (LLMs) are now the foundation of conversational AI development services. From customer service chatbots to corporate assistants with CRM integration, LLMs help companies deliver faster, more personalized interactions at scale. Hallucinations, meaning answers that sound confident but are inaccurate or misleading, remain a recurring problem.

In 2026, preventing hallucinations in LLMs is no longer optional. Inaccurate responses from AI chatbots connected to CRMs, internal tools, or customer-facing platforms can undermine trust, disrupt business processes, and raise compliance concerns. This blog examines what causes hallucinations and the most effective tactics companies can use to build dependable, hallucination-free AI chatbots.

What Causes LLM Hallucinations?

The first step in prevention is to understand the underlying causes. Typical causes include:

Limitations of training data: LLMs learn from large datasets that could be inaccurate, out-of-date, or incomplete.

Lack of grounding: Models predict text from patterns rather than verified facts, which can produce false responses.

Unclear prompts or context: Vague inputs often cause over-generalization or incorrect assumptions.

Probabilistic inference: Token prediction can produce plausible but factually wrong outputs.

These challenges become more serious when LLMs are used in business-critical workflows such as sales, support, or analytics.
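The probabilistic inference cause can be illustrated with a small sketch. The token scores below are hypothetical, made up for illustration rather than taken from any real model. The point is only that a wrong but plausible token can carry substantial probability, and that lowering the sampling temperature shrinks that probability without removing it.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a lower temperature sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical next-token scores for the prompt "The capital of Australia is"
candidates = ["Canberra", "Sydney", "Melbourne"]
logits = [2.0, 1.5, 0.5]

for t in (1.0, 0.3):
    probs = softmax(logits, temperature=t)
    wrong = 1.0 - probs[0]  # probability mass on the incorrect candidates
    print(f"temperature={t}: P(wrong answer) = {wrong:.2f}")
```

Because the wrong candidates keep nonzero probability at any positive temperature, parameter tuning alone is unlikely to be enough, which is why the strategies that follow add grounding and verification.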

Proven Strategies to Prevent Hallucinations in LLMs

1. Use Retrieval-Augmented Generation (RAG)

Retrieval-Augmented Generation grounds LLM replies in reliable, current data. Before producing a response, the system retrieves relevant information from validated sources such as internal documents, company databases, or approved knowledge bases.

RAG has been reported to reduce hallucination rates by 40–70% in real-world applications. For businesses using conversational AI development services, RAG helps chatbot responses stay accurate even as data and policies change, which makes it essential for CRM-integrated chatbots.
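The retrieve-then-generate flow can be sketched as follows. This is a minimal illustration under stated assumptions: the knowledge base entries are hypothetical, simple keyword overlap stands in for the embedding search a production system would more likely use, and the call to the LLM itself is left out. The functions `retrieve` and `build_prompt` are names invented for this example.

```python
import re

# Hypothetical verified knowledge base; in practice these would be internal documents.
KNOWLEDGE_BASE = [
    "Refunds are available within 30 days of purchase.",
    "Support hours are 9am to 5pm Eastern, Monday through Friday.",
    "Premium plans include priority email support.",
]

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question, documents, top_k=2, min_overlap=2):
    """Rank documents by words shared with the question and drop weak matches."""
    q = tokenize(question)
    scored = [(len(q & tokenize(doc)), doc) for doc in documents]
    scored = [pair for pair in scored if pair[0] >= min_overlap]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]

def build_prompt(question, documents):
    """Return a grounded prompt, or None when nothing relevant was retrieved."""
    context = retrieve(question, documents)
    if not context:
        return None  # caller should answer "I don't know" or hand off to a person
    sources = "\n".join(f"- {doc}" for doc in context)
    return (
        "Answer using only the sources below. "
        "If they do not contain the answer, say you do not know.\n"
        f"Sources:\n{sources}\n"
        f"Question: {question}"
    )

print(build_prompt("Are refunds available after purchase?", KNOWLEDGE_BASE))
```

Returning `None` when retrieval finds nothing is a deliberate choice in this sketch: it gives the application a clear point at which to decline rather than let the model answer from memory.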

2. Fine-Tune Models on Domain-Specific Data

Generic LLMs lack a deep understanding of specific businesses or industries. Fine-tuning models on carefully curated, high-quality, domain-specific data such as FAQs, manuals, or CRM records can substantially improve accuracy.

In 2026, noise-robust fine-tuning techniques help models handle ambiguous or incomplete inputs without fabricating answers. This is especially valuable for enterprise assistants and customer support bots where precision matters.

3. Add Reasoning, Verification & Human Oversight

High-risk applications require multiple safety layers:

Reasoning prompts: Structured reasoning or step-by-step logic helps models produce more grounded answers.

Post-generation validation: Outputs are cross-checked against reliable sources using secondary models or rules.

Human-in-the-loop review: Human assessment remains crucial in regulated industries such as finance, healthcare, or legal services.

Emerging advancements for 2026 include automated hallucination detectors that flag inconsistent or unsupported outputs before users see them.
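A rule-based version of the validation and escalation layers might look like the sketch below. It is an assumption-laden illustration, not a description of any particular detector: word overlap is a crude stand-in for the secondary model mentioned above, and the `threshold` value and the function names `unsupported_sentences` and `route` are hypothetical.

```python
import re

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def unsupported_sentences(answer, sources, threshold=0.6):
    """Return sentences whose words are mostly absent from every source."""
    flagged = []
    for sentence in re.split(r"(?<=[.!?])\s+", answer.strip()):
        words = tokenize(sentence)
        if not words:
            continue
        # Best fraction of the sentence's words found in any single source.
        best = max(
            (len(words & tokenize(src)) / len(words) for src in sources),
            default=0.0,
        )
        if best < threshold:
            flagged.append(sentence)
    return flagged

def route(answer, sources):
    """Send the answer only if every sentence is supported; otherwise escalate."""
    flagged = unsupported_sentences(answer, sources)
    return ("human_review", flagged) if flagged else ("send", [])

sources = ["Refunds are available within 30 days of purchase."]
answer = "Refunds are available within 30 days. We also offer lifetime warranties."
print(route(answer, sources))
```

In this example the second sentence has no support in the source, so the whole answer is routed to human review instead of being sent.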

4. Continuous Monitoring and Feedback Loops

Preventing hallucinations is a continuous process. Companies need to monitor chatbot exchanges, log errors, and gather user feedback. These findings support proactive retraining and help identify new knowledge gaps.

As corporate data, customer behavior, and market conditions change, ongoing monitoring keeps CRM-integrated AI chatbots dependable.
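One simple way to turn logged exchanges into actionable signals is sketched below. The `FeedbackLog` class, its method names, and the thresholds are all hypothetical, and the log is kept in memory for brevity where a real deployment would persist it.

```python
from collections import Counter, defaultdict

class FeedbackLog:
    """In-memory log of chatbot exchanges with user or reviewer verdicts."""

    def __init__(self):
        self.totals = Counter()            # exchanges seen per topic
        self.errors = Counter()            # exchanges marked incorrect per topic
        self.examples = defaultdict(list)  # incorrect exchanges kept for retraining

    def record(self, topic, question, answer, correct):
        self.totals[topic] += 1
        if not correct:
            self.errors[topic] += 1
            self.examples[topic].append((question, answer))

    def error_rates(self):
        return {t: self.errors[t] / n for t, n in self.totals.items()}

    def topics_needing_attention(self, max_rate=0.2, min_samples=3):
        """Topics with enough traffic and an error rate above the threshold."""
        return sorted(
            t for t, n in self.totals.items()
            if n >= min_samples and self.errors[t] / n > max_rate
        )

log = FeedbackLog()
log.record("billing", "When is my invoice due?", "On the 1st.", correct=False)
log.record("billing", "Can I pay by card?", "Yes.", correct=True)
log.record("billing", "Is there a late fee?", "No.", correct=False)
print(log.topics_needing_attention())
```

The stored examples of incorrect answers are the raw material for the retraining and knowledge-gap analysis described above.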

5. Regular Model Maintenance and Knowledge Updates

Even well-trained LLMs degrade without maintenance. Regularly updating training data, retrieval sources, prompts, and inference parameters keeps responses accurate and aligned with current business knowledge.

Structural knowledge constraints—such as limiting responses to verified datasets—are particularly effective for enterprise and CRM-driven chatbots.

The Role of CRM Integration in Reducing Hallucinations

Integrating AI chatbots with a CRM plays a critical role in hallucination prevention. By accessing verified, real-time customer and business data, chatbots can deliver context-aware, factual responses. This reduces errors in sales, support, and lead management while enabling safe personalization at scale.
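The idea of answering from verified CRM data can be sketched as below. The records, field names, and the `renewal_reply` function are hypothetical, and a dictionary stands in for a real CRM, which would be queried through its own API. The key behavior is that a missing field triggers a fallback instead of a guess.

```python
# Hypothetical CRM records keyed by customer ID; a real system would query the CRM.
CRM = {
    "C-1001": {"name": "Dana", "plan": "Premium", "renewal_date": "2026-09-01"},
    "C-1002": {"name": "Lee", "plan": "Basic"},  # renewal_date is missing
}

FALLBACK = "I could not find that information. Let me connect you with an agent."

def renewal_reply(customer_id, crm=CRM):
    """Answer from CRM fields only; never guess a missing value."""
    record = crm.get(customer_id)
    if record is None or "renewal_date" not in record:
        return FALLBACK
    return (
        f"Hi {record['name']}, your {record['plan']} plan "
        f"renews on {record['renewal_date']}."
    )

print(renewal_reply("C-1001"))
print(renewal_reply("C-1002"))
```

Filling a fixed template from record fields is more restrictive than free-form generation, but for factual details such as dates and plan names that restriction is the point.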

Conclusion

LLM hallucinations pose real risks, but they are manageable. By combining Retrieval-Augmented Generation, domain-specific fine-tuning, reasoning and verification layers, continuous monitoring, and CRM integration, businesses can significantly reduce hallucinations in LLMs.

In 2026, the most successful AI chatbots won’t just be smarter—they’ll be trustworthy, scalable, and enterprise-ready. At AnavClouds Analytics.ai, we help organizations build hallucination-free AI chatbot solutions that integrate seamlessly with CRM and business systems, delivering accuracy you can rely on.

Source: https://www.anavcloudsanalytics.ai/blog/prevent-hallucinations-in-llm/